Image generated with Ideogram.ai

I’m sure most of us have used a search engine.

There’s even a phrase for it: “Just Google it.” It means you should look up the answer with Google’s search engine, which shows how closely Google is now identified with search itself.

Why are search engines so useful? They let users find information on the internet from a short query and organize that information by relevance and quality. In turn, search opens up access to a vast body of knowledge that was previously hard to reach.

Traditionally, search engines find information through lexical matching, that is, by matching the words in the query against the words in the documents. This works well, but the results are sometimes less accurate than they could be because the user’s intent differs from the literal input text.

For example, the input “Red Dress Shot in the Dark” can have a double meaning, especially because of the word “Shot.” The more likely meaning is that a picture of a red dress was taken in the dark, but a traditional search engine wouldn’t understand that. That’s why Semantic Search is emerging.
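To make the limitation concrete, here is a minimal sketch of word-overlap scoring, a deliberately simplified stand-in for lexical matching. The `lexical_score` function and both documents are invented for illustration; real search engines use far more elaborate ranking.

```python
def lexical_score(query: str, document: str) -> int:
    """Count how many distinct query words also appear in the document."""
    query_words = set(query.lower().split())
    doc_words = set(document.lower().split())
    return len(query_words & doc_words)


query = "Red Dress Shot in the Dark"

# Hypothetical documents, invented for this illustration.
documents = [
    "A shot in the dark is a guess made without any evidence",
    "Photo of a crimson gown taken at night with low lighting",
]

scores = [lexical_score(query, doc) for doc in documents]
for doc, score in zip(documents, scores):
    print(score, doc)
```

The first document shares four words with the query even though it is about guessing, while the second, which describes what the user probably wants, shares none.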

Semantic search can be defined as a search approach that considers the meaning of words and sentences. Its output is information that matches the meaning of the query, in contrast to a traditional search, which matches the words of the query.

In the NLP (Natural Language Processing) field, vector databases have significantly improved semantic search capabilities by handling the storage, indexing, and retrieval of high-dimensional vectors that represent the meaning of text. As a result, semantic search and vector databases are closely related fields.

This article will discuss semantic search and how to use a Vector Database. With that in mind, let’s get into it.

Let’s discuss Semantic Search in the context of Vector Databases.

Semantic search is based on the meaning of the text, but how can we capture that information? A computer doesn’t have feelings or knowledge the way humans do, which means the word “meaning” has to refer to something else. In semantic search, “meaning” becomes a representation of knowledge that is suitable for meaningful retrieval.

This representation of meaning comes in the form of an embedding, the result of transforming text into a vector of numbers. For example, we can transform the sentence “I want to learn about Semantic Search” using the OpenAI Embedding model.

[-0.027598874643445015, 0.005403674207627773, -0.03200408071279526, -0.0026835924945771694, -0.01792600005865097,...]

How can this numerical vector capture meaning, then? Let’s take a step back. The result you see above is the embedding of the sentence. The embedding output would be different if you replaced even just one word in that sentence. A single word on its own would have a different embedding output as well.

Looking at the whole picture, the embedding of a single word and the embedding of a whole sentence differ significantly, because sentence embeddings account for the relationships between words and the sentence’s overall meaning, which individual word embeddings do not capture. In other words, each word, sentence, and text has its own distinct embedding. This is how embeddings can capture meaning instead of relying on lexical matching.

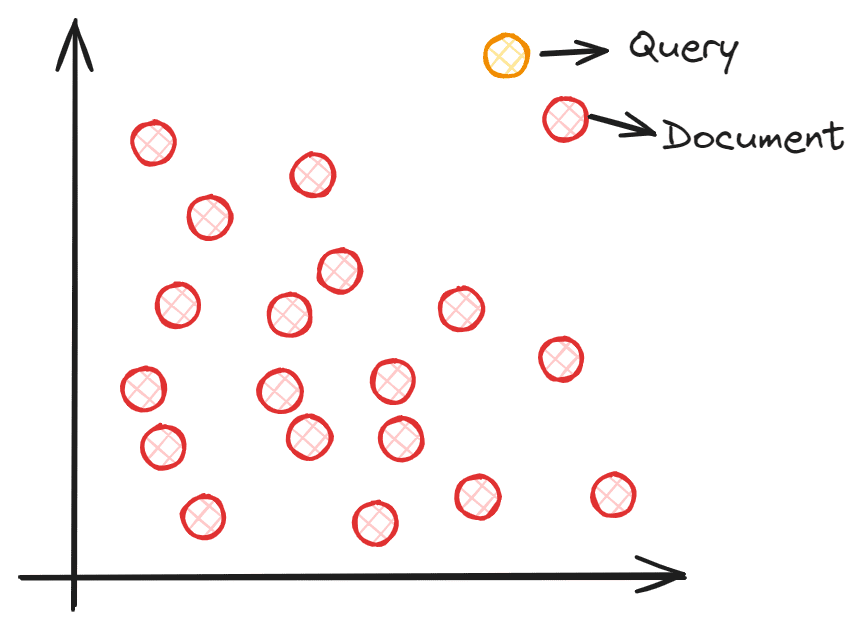

So, how does semantic search work with vectors? Semantic search embeds your corpus into a vector space, so that each piece of data (a text, a sentence, a document, and so on) becomes a coordinate point. At search time, the query input is embedded into the same vector space. We then find the embeddings in our corpus that are closest to the query using a vector similarity measure such as cosine similarity. To understand this better, you can see the image below.

Image by Author

Each document embedding is placed in the vector space, and so is the query embedding. The document closest to the query is selected because it theoretically has the closest semantic meaning to the input.
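The nearest-document idea can be sketched in a few lines of plain Python. The three-dimensional vectors and document names below are made up for illustration; real embeddings come from a model and have hundreds or thousands of dimensions.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors of equal length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Hypothetical document embeddings (toy values, not produced by any model).
corpus = {
    "doc_contract": [0.9, 0.1, 0.0],
    "doc_tax": [0.1, 0.9, 0.1],
    "doc_injunction": [0.7, 0.2, 0.6],
}

# Hypothetical query embedding in the same vector space.
query_vector = [0.8, 0.2, 0.1]

# Rank the documents from most to least similar to the query.
ranked = sorted(
    corpus,
    key=lambda doc_id: cosine_similarity(query_vector, corpus[doc_id]),
    reverse=True,
)
print(ranked)
```

This brute-force version compares the query with every document, which is fine for a toy corpus but becomes slow as the number of vectors grows.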

However, maintaining a vector space that contains all the coordinates is a massive task, especially with a larger corpus. A Vector Database is a better place to store the vectors than holding the whole vector space yourself, because it supports efficient vector calculations and can stay efficient as the data grows.

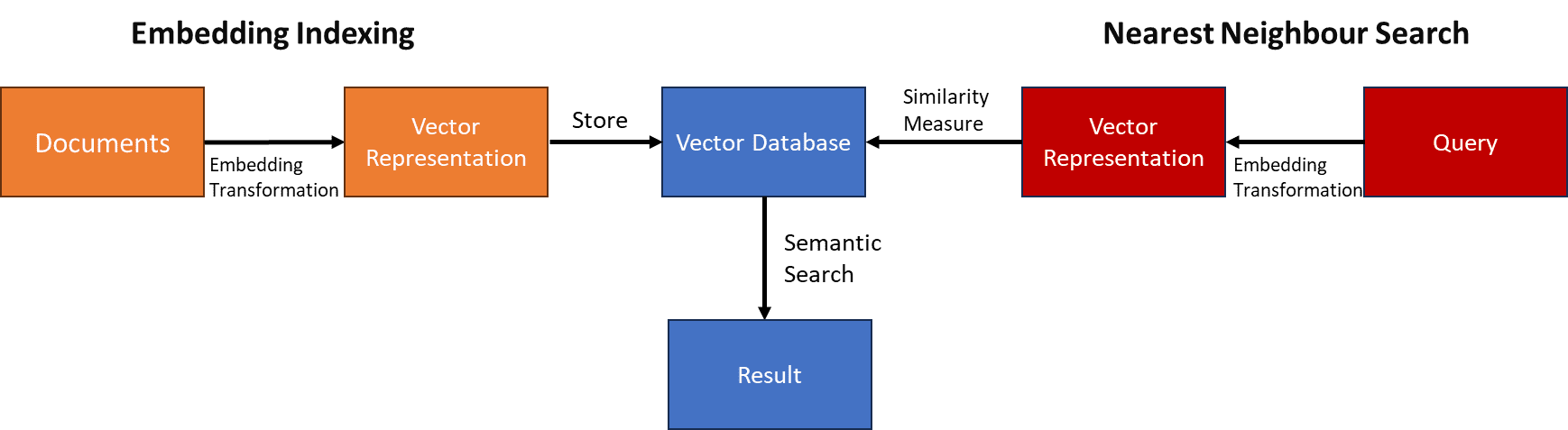

The high-level process of Semantic Search with Vector Databases can be seen in the image below.

Image by Author

In the next section, we’ll perform a semantic search with a Python example.

In this article, we’ll use Weaviate, an open-source Vector Database. For tutorial purposes, we’ll also use Weaviate Cloud Service (WCS) to store our vectors.

First, we need to install the Weaviate Python package.

    pip install weaviate-client

Then, register for a free cluster via the Weaviate Console and note both the Cluster URL and the API Key.

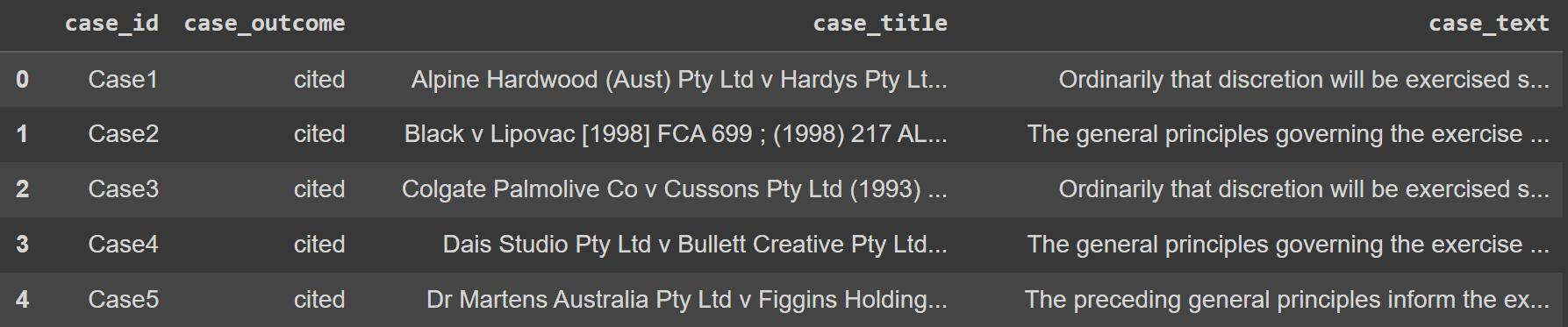

For the example dataset, we’ll use the Legal Text data from Kaggle. To keep things simple, we’ll use only the first 100 rows of the data.

    import pandas as pd

    # Load only the first 100 rows of the dataset.
    data = pd.read_csv('legal_text_classification.csv', nrows=100)

Image by Author

Next, we’ll store all the data in the Vector Database on Weaviate Cloud Service. To do that, we need to set up the connection to the database.

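    import weaviate
    import os
    import requests
    import json

    # Replace the placeholders with your own credentials.
    cluster_url = "YOUR_CLUSTER_URL"
    wcs_api_key = "YOUR_WCS_API_KEY"
    openai_api_key = "YOUR_OPENAI_API_KEY"

    # Connect to the WCS cluster; the OpenAI key is sent as a header
    # so that Weaviate can call the OpenAI embedding model.
    client = weaviate.connect_to_wcs(
        cluster_url=cluster_url,
        auth_credentials=weaviate.auth.AuthApiKey(wcs_api_key),
        headers={
            "X-OpenAI-Api-Key": openai_api_key
        }
    )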
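Hard-coded keys are easy to leak when a script is shared. One option is to read them from environment variables instead. This is a minimal sketch: the variable names `WCS_CLUSTER_URL`, `WCS_API_KEY`, and `OPENAI_API_KEY` and the helper `load_settings` are hypothetical choices for this example, not names that Weaviate requires.

```python
import os


def load_settings(env=None):
    """Collect the three connection settings, failing early if one is missing."""
    env = os.environ if env is None else env
    # Hypothetical variable names chosen for this example.
    names = ["WCS_CLUSTER_URL", "WCS_API_KEY", "OPENAI_API_KEY"]
    missing = [name for name in names if not env.get(name)]
    if missing:
        raise RuntimeError("Missing environment variables: " + ", ".join(missing))
    return {name: env[name] for name in names}


# Example with a stand-in mapping so the sketch runs anywhere;
# in a real script you would call load_settings() with no arguments.
example_env = {
    "WCS_CLUSTER_URL": "YOUR_CLUSTER_URL",
    "WCS_API_KEY": "YOUR_WCS_API_KEY",
    "OPENAI_API_KEY": "YOUR_OPENAI_API_KEY",
}
settings = load_settings(example_env)
print(sorted(settings))
```

The returned values could then be passed to the connection call in place of the placeholder strings.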

The next thing we need to do is connect to Weaviate Cloud Service and create a class (a collection in the Weaviate client, similar to a table in SQL) to store all the text data.

    import weaviate.classes as wvc

    client.connect()

    # Create the collection; stored text is vectorized with the OpenAI embedding model.
    legal_cases = client.collections.create(
        name="LegalCases",
        vectorizer_config=wvc.config.Configure.Vectorizer.text2vec_openai(),
        generative_config=wvc.config.Configure.Generative.openai()
    )

In the code above, we create a LegalCases class that uses the OpenAI Embedding model. In the background, any text object we store in the LegalCases class goes through the OpenAI Embedding model and is stored as an embedding vector.

Let’s store the legal text data in the vector database. To do that, you can use the following code.

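    # Convert the DataFrame into a list of dictionaries, one per row.
    sent_to_vdb = data.to_dict(orient="records")

    # Insert all rows into the collection in one call.
    legal_cases.data.insert_many(sent_to_vdb)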
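One thing to watch for: when a DataFrame has empty cells, `to_dict(orient="records")` represents them as NaN, which may not serialize cleanly when sent to a database. The sketch below shows one possible cleanup step. The helper `clean_record` and the sample rows are hypothetical, and whether this step is needed for this dataset is an assumption to check against your own data.

```python
import math


def clean_record(record):
    """Return a copy of the record with NaN values replaced by None."""
    cleaned = {}
    for key, value in record.items():
        if isinstance(value, float) and math.isnan(value):
            cleaned[key] = None
        else:
            cleaned[key] = value
    return cleaned


# Hypothetical rows shaped like the output of to_dict(orient="records").
records = [
    {"case_id": "Case1", "case_outcome": "cited", "case_text": "Some text"},
    {"case_id": "Case2", "case_outcome": "cited", "case_text": float("nan")},
]

cleaned_records = [clean_record(record) for record in records]
print(cleaned_records)
```

The cleaned list could then be inserted in place of the raw records.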

You should see in the Weaviate cluster that your legal text is now stored there.

With the Vector Database ready, let’s try Semantic Search. The Weaviate API makes this easy, as shown in the code below. In this example, we’ll try to find the cases that took place in Australia.

    response = legal_cases.query.near_text(
        query="Cases in Australia",
        limit=2
    )

    # Print the properties of each returned object.
    for obj in response.objects:
        print(obj.properties)

The result is shown below.

{'case_title': 'Castlemaine Tooheys Ltd v South Australia [1986] HCA 58 ; (1986) 161 CLR 148', 'case_id': 'Case11', 'case_text': 'Hexal Australia Pty Ltd v Roche Therapeutics Inc (2005) 66 IPR 325, the chance of irreparable harm was regarded by Stone J as, certainly, a separate component that needed to be established by an applicant for an interlocutory injunction. Her Honour cited the well-known passage from the judgment of Mason ACJ in Castlemaine Tooheys Ltd v South Australia [1986] HCA 58 ; (1986) 161 CLR 148 (at 153) as support for that proposition.', 'case_outcome': 'cited'}

{'case_title': 'Deputy Commissioner of Taxation v ACN 080 122 587 Pty Ltd [2005] NSWSC 1247', 'case_id': 'Case97', 'case_text': 'both propositions are of some novelty in circumstances such as the present, counsel is right in submitting that there is some support to be derived from the decisions of Young CJ in Eq in Deputy Commissioner of Taxation v ACN 080 122 587 Pty Ltd [2005] NSWSC 1247 and Austin J in Re Currabubula Holdings Pty Ltd (in liq); Ex parte Lord (2004) 48 ACSR 734; (2004) 22 ACLC 858, at least so far as standing is concerned.', 'case_outcome': 'cited'}
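The raw property dictionaries are long, so a small formatting helper can make the hits easier to scan. This is only a sketch: `summarize_hit` is a hypothetical helper, and the stand-in objects below mimic just the `.properties` attribute so the example runs without a database connection.

```python
from types import SimpleNamespace


def summarize_hit(properties, max_chars=80):
    """Return the case title followed by a shortened excerpt of the case text."""
    title = properties.get("case_title", "<no title>")
    text = properties.get("case_text", "")
    if len(text) > max_chars:
        text = text[:max_chars].rstrip() + "..."
    return f"{title}: {text}"


# Stand-in objects (invented) that expose a .properties dictionary.
fake_objects = [
    SimpleNamespace(properties={"case_title": "Case A", "case_text": "short text"}),
    SimpleNamespace(properties={"case_title": "Case B", "case_text": "x" * 200}),
]

for obj in fake_objects:
    print(summarize_hit(obj.properties))
```

With a live connection, the same helper could be applied to each object in the query response.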

As you can see, we have two different results. In the first case, the word “Australia” is mentioned directly in the document, so it’s easier to find. The second result, however, doesn’t contain the word “Australia” anywhere. Semantic Search can still find it because it contains terms related to Australia, such as “NSWSC,” which stands for New South Wales Supreme Court, and “Currabubula,” which is a village in Australia.

Traditional lexical matching may miss the second record, but semantic search can retrieve it because it takes the meaning of the document into account.
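A quick check illustrates the point. The sketch below runs a plain keyword filter over shortened excerpts of the two `case_text` values shown above; the filter is a simplified stand-in for lexical matching, not how any particular search engine works.

```python
# Shortened excerpts of the two case_text values returned by the query.
results = {
    "Case11": "Hexal Australia Pty Ltd v Roche Therapeutics Inc (2005) 66 IPR 325",
    "Case97": "Deputy Commissioner of Taxation v ACN 080 122 587 Pty Ltd [2005] NSWSC 1247",
}

keyword = "australia"

# Keep only the cases whose text literally contains the keyword.
keyword_hits = [
    case_id for case_id, text in results.items() if keyword in text.lower()
]
print(keyword_hits)
```

Only the first case survives the keyword filter, even though both were returned by the semantic query.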

That’s all for a simple implementation of Semantic Search with a Vector Database.

Search engines have dominated information acquisition on the internet, even though the traditional lexical matching method has a flaw: it fails to capture user intent. This limitation gave rise to Semantic Search, a search method that can interpret the meaning of documents and queries. Combined with vector databases, semantic search becomes even more efficient.

In this article, we explored how Semantic Search works and walked through a hands-on Python implementation with the open-source Weaviate Vector Database. I hope it helps!

Cornellius Yudha Wijaya is a data science assistant manager and data writer. While working full-time at Allianz Indonesia, he loves to share Python and data tips via social media and writing media. Cornellius writes on a variety of AI and machine learning topics.